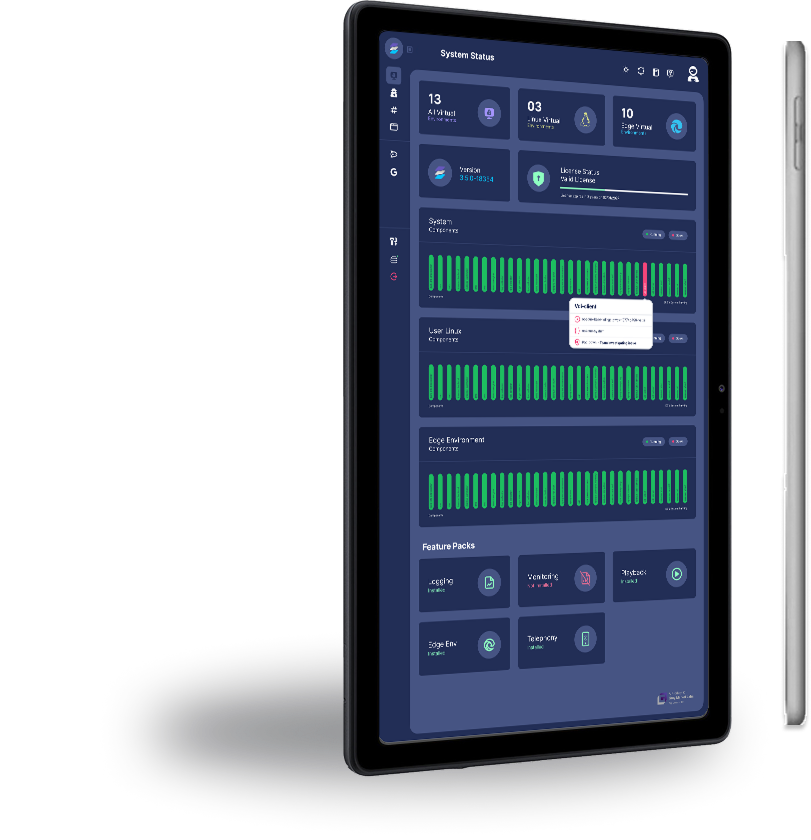

High-risk work rarely follows a single path. Investigators reopen cases, analysts revisit sources, developers evaluate tools, and operators need environments that launch with the right configuration every time. Replica 4.5 helps teams turn those recurring patterns into governed workflows. This release introduces a cleaner user experience, reusable Enclave templates, default URL and DNS logging for container Virtual Environments, stronger usage visibility, and more automated egress operations.

The result is a platform that is simpler for users to navigate and easier for admins to scale without giving up the isolation, governance, and audit-ready visibility required for sensitive work. Read on to see what’s new in our 4.5 release.

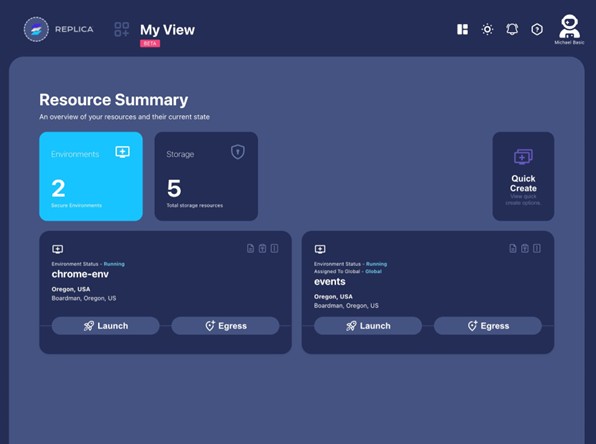

Workflow-First Entry Experience

Replica 4.5 reduces the number of decisions users need to make before they can begin secure work.

- Template-based setup and workflow-specific state handling help teams launch environments with the right configuration for the job. A role-differentiated dashboard reduces noise by focusing the experience around the environments and actions each user is most likely to need. The new Simplified My View experience prioritizes a user’s interests and frequent interactions, helping teams spend less time navigating and more time working.

- File uploads are also more intuitive. Users can now drag and drop files anywhere on the Virtual Environment to upload them. Files that exceed size limits are blocked before the upload begins, and the upload panel can be minimized so larger uploads continue in the background.

- Replica 4.5 also adds GitBook AI documentation support in Webkit, giving users quick answers from Replica documentation without leaving the platform.

Why it matters: Teams should not have to rebuild the same setup logic every time they start an investigation, test a tool, or resume research. Replica 4.5 makes the approved path more visible, more guided, and easier to follow.

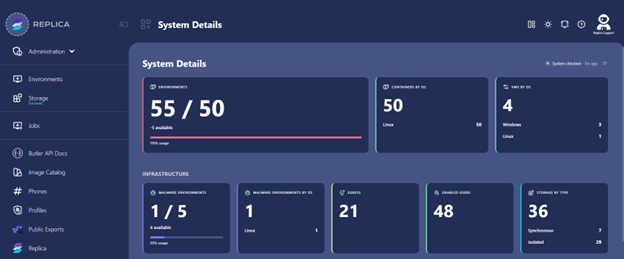

Standardized Network Activity Records

Replica 4.5 makes network activity easier to capture and review across container Virtual Environments (VEs), and gives admins clearer visibility into platform usage.

- URL logging and DNS logging are now enabled by default for all container VEs, removing the separate URL Logging checkbox from the creation flow. This makes logging behavior more consistent and reduces the chance that a key activity record is missed because a setting was skipped.

- Network review is also consolidated. Instead of separate actions for DNS query logs, URL logs, and packet capture, users can now use View Network Traffic as the central place to review traffic-related information. Packet capture files are written to the VE desktop, and Wireshark is installed so users can review them.

- Admins also gain access to consumption metrics, including environment counts by type and other platform utilization signals. These views help teams understand how Replica is being used, where adoption is growing, and which workflows may need more capacity or standardization.

Why it matters: Audit readiness depends on consistency. Default logging and consolidated traffic review help admins and operators get to the right records faster, with fewer workflow-specific exceptions to explain.

Reusable Enclaves for Persistent Work

Replica 4.5 expands Enclaves so teams can preserve context, standardize setup, and carry recurring work forward across sessions.

- For work that needs to persist over time, Storage Enclaves help keep workload data and project context available across investigations, research, secure development, malware analysis, AI/tool evaluation, and other workflows where teams need to return without rebuilding from scratch.

- For work that needs to repeat, Worker Enclaves support workflow and task execution, helping teams package recurring steps with the right resources in place.

- Enclaves can also include reusable Virtual Environment templates that define the right image, egress, storage, and launch settings for a workflow. Those settings can be pre-set for standardized use or left configurable at launch when teams need flexibility.

- Replica 4.5 also adds support for multiple Storage Enclaves and for synchronous Storage Enclaves on Windows and Linux VMs, extending persistent workflow behavior across more environment types.

Why it matters: Many teams repeat the same secure-work patterns across cases, projects, regions, or tools. Enclaves standardize those patterns so teams can launch with the right configuration, preserve the right context, and pick up recurring work where they left off.

More Resilient Egress Operations

Replica 4.5 improves the foundation for region-sensitive and attribution-sensitive work.

- A new egress manager helps automate point-of-presence requests, credentials, connection information, and failover when an egress connection drops. Egress options now include commercial VPN partners, existing AWS or Azure VMs, and new AWS egress deployments in available regions. Customer clusters are expected to pick up new egress automatically.

- Router behavior is also being simplified. Replica 4.5 moves router connections to static configuration to reduce DHCP-related slowness, complexity, and failure points. The expected user impact is faster VE startup, faster egress switching, and better resilience if egress drops.

- This release also adds Windows 11 on Azure and REMnux on Proxmox, expanding image support for customer workflows, including malware analysis.

Why it matters: Network behavior is part of the workflow. More automated egress management and steadier routing reduce manual coordination for admins while helping users maintain continuity as environments, regions, or infrastructure needs change.

Release Highlights

Replica 4.5 helps teams standardize secure work across users, workflows, and infrastructure.

- User experience: Simplified My View, role-differentiated dashboards, template-based setup, drag-and-drop uploads, a minimizable upload panel, and GitBook AI documentation support.

- Activity and usage visibility: URL and DNS logging by default for container VEs, consolidated View Network Traffic action, PCAP files written to the VE desktop, Wireshark installed for review, and admin consumption metrics.

- Enclaves and workflows: Reusable VE templates, persistent storage, workflow resources, multiple Enclave types, multiple Storage Enclave support, and synchronous storage support for Windows and Linux VMs.

- Networking and egress: Egress manager, automated failover support, expanded egress options, automatic pickup of new egress by customer clusters, static router connections, faster egress switching, Windows 11 on Azure, and REMnux on Proxmox.

Replica 4.5 is built for teams that need secure environments to be repeatable, observable, and operationally consistent. Whether you are supporting investigations, research, secure development, malware analysis, or region-sensitive workflows, this release helps turn recurring work into governed execution.

Read the full release notes or request a demo to see Replica 4.5 in action.